Handy is a local voice-to-text desktop app by @cjpais that I’ve been contributing to for a few months. Push a key, speak, release, and whatever you said appears at the cursor. No cloud, no API key, no latency across the wire. The transcription is done by transcribe-rs, a Rust crate that wraps a handful of ASR engines (whisper, Parakeet, SenseVoice, Canary, openai) behind a unified interface.

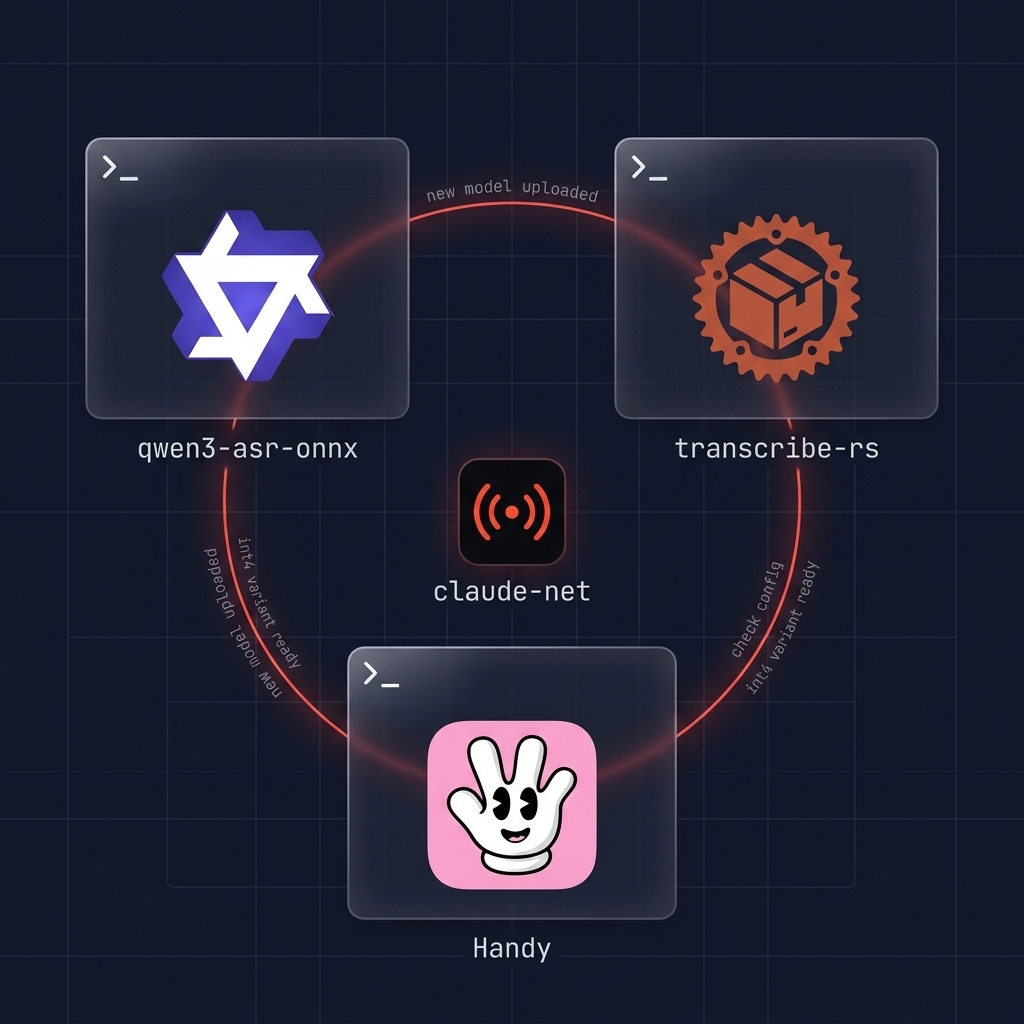

This is the story of adding a new engine to that line up, Alibaba’s Qwen3-ASR, and the ~90 numbered optimisation experiments that got it from 8 seconds of inference time down to 1.4 seconds on an 11s clip of JFK, while keeping WER within half a percentage point of the FP32 baseline. Alongside that, the integration work that had to happen in three different repos (qwen3-asr-onnx for the export pipeline, transcribe-rs for the Rust engine, Handy for the desktop app). And a fair bit about how much of the research was driven by Claude agents running in a loop while I slept.

Why Qwen3 at all

A big part of how I use Handy in practice is out and about. Sitting on a bench in the park, walking to the car, somewhere in a shared office, and I want to speak quietly without projecting, while the world keeps going around me. Birds, traffic, other people’s conversations, wind on the mic. Low-volume speech over a bed of background noise is the tough case, and the local models I had before Qwen3 didn’t really cope with it. Parakeet hears "thinking nothing" where I said "getting nothing". Whisper API occasionally hallucinated whole sentences ("Rest is on him" for "Where's the volume?"). GPT-4o-mini-transcribe was the best by a margin but that’s cloud, and cloud voice transcription is the thing I’m trying to get away from.

Qwen3-ASR 0.6B was the local model that actually got "volume" where Parakeet heard "all here". Proper punctuation and capitalisation too, which neither Parakeet nor most of the whisper variants give you out of the box. The catch was that it was ~10× slower than Parakeet even in FP32, 7.94s on an 11s clip (0.72x RTF). So the obvious question: can it be made fast enough to ship without giving up the accuracy that made it worth shipping in the first place?

The integration shape

Three repos have to stay in sync for a new engine to land:

- qwen3-asr-onnx: the Python export pipeline that takes Alibaba’s HuggingFace PyTorch release and produces ONNX files you can load from Rust. Also the home of the quantisation experiments.

- transcribe-rs: the Rust engine crate. New engine = new feature flag, new module implementing

TranscriptionEngine, new engine-specific options. - Handy: the Tauri desktop app. New engine = new model registry entries, new UI card, new download + extraction path, new TypeScript binding.

Alibaba released the PyTorch weights on HuggingFace (Qwen/Qwen3-ASR-0.6B and Qwen/Qwen3-ASR-1.7B) but no ONNX. So the very first job wasn’t optimisation at all, it was getting the model out of PyTorch and into an ONNX graph that ONNX Runtime could actually load. That turned out to be its own adventure.

A short list of what had to be figured out before the first successful export even ran:

- The encoder wrapper had wrong attribute names. The model itself came from the HuggingFace PyTorch release (Qwen/Qwen3-ASR-0.6B, Qwen/Qwen3-ASR-1.7B), but the Python wrapper code (the class that receives the loaded PyTorch model and exposes a traceable forward method) was initially patterned on antirez/qwen-asr, an MLX reimplementation. That wrapper referred to submodules as

conv1,conv2,conv3,embed_positions. The actual HuggingFace module usesconv2d1,conv2d2,conv2d3,positional_embedding.model.thinker.audio_tower.named_children()to the rescue. - Encoder layers wanted 2D inputs, not 3D.

(seq_len, embed_dim)plus acu_seqlenstensor, not(batch, seq_len, embed_dim). The attention layer internally doesseq_length, _ = hidden_states.size()which quietly breaks if you pass 3D.inspect.signature(layer.forward)was the diagnostic. - SDPA GQA ONNX export crashed. The HuggingFace SDPA path sets

enable_gqa=Truewhenever the module hasnum_key_value_groups, regardless of whether Q and KV actually have different head counts. The encoder has 14 Q heads and 14 KV heads (plain MHA), but the onnxscript SDPA converter assertsq_num_heads > kv_num_headsand blows up. Fix: force_attn_implementation = "eager"on each encoder layer before export. DynamicCacheisn’t traceable bytorch.export.transformers’ cache uses dynamic list growth andtorch.cat, neither of which the exporter can follow. And the API changed in 4.57+ (cache.key_cache[i]→cache.layers[i].keys), so the existing workaround code was broken anyway. Had to rewrite both decoder wrappers to iterate the transformer layers manually with stacked KV tensors.- Variadic args kill

dynamic_axes. When you’ve got 56 past-KV tensors, passing them as*past_key_values_flatbreaks the dynamic_axes→dynamic_shapes conversion. Fixed by stacking into a single[num_layers, batch, kv_heads, seq, head_dim]tensor instead.

Long story short, a few days of an agent hacking on the export, surfacing each new failure as it hit it, kicking the problem back to me only when it needed a judgement call. My actual attention on that phase was maybe a handful of minutes in total, checking in on progress, approving the next fix direction. The export pipeline from that work now lives in qwen3-asr-onnx/export.py and produces the split decoder (decoder_init.onnx + decoder_step.onnx + shared decoder_weights.data) that all subsequent experiments were run against. None of what follows in this post would have been possible without getting that first clean trace out.

For Handy and transcribe-rs the integration pattern already existed for other engines, so it was mostly a shape exercise: follow Parakeet’s layout, add int4/FP32 variants to the registry, wire the language setting through. That last bit tripped me up. Whisper/Canary/SenseVoice all had the user’s “Expected Language” setting flowing through to inference. Qwen3 was hardcoded to TranscribeOptions::default() which meant language=None. Qwen3’s auto-detection is genuinely excellent (better than most other local models I’ve used, which typically either guess wrong on the first token or require a language code up front), so this wasn’t broken so much as leaving a small accuracy bump on the table. Passing an explicit language hint when the user has one set nudges the WER down another fraction of a percent. Caught that one by code inspection, every arm in the transcription match block built a proper TranscribeOptions except the Qwen3 arm. 3 line fix but only obvious once I looked.

One thing that made the three-repo dance a fair bit easier: I had a separate Claude Code session running in each repo, and they could talk to each other over claude-net. That’s my MCP plugin + hub that lets Claude agents on the same LAN/Tailscale network send each other messages, form ad-hoc teams, or broadcast. So when the Handy agent needed the qwen3-asr-onnx agent to produce tar.gz archives in a particular layout, it’d just ping it directly rather than me having to be the courier. The agents pick up context in their own repo that I’d otherwise have to re-explain. Turns out that saves a surprising amount of prompt overhead when you’re juggling three code bases at once.

The “ology” bug

Early in integration, the int4 decoder would occasionally output only the text "ology." regardless of what audio had been recorded. Not hallucination in the traditional sense, it was a consistent fixed attractor. Clean audio, VAD detected speech, encoder output looked fine, decoder fell into the same degenerate trap.

The root cause turned out to be the prompt. Qwen3-ASR is a speech-conditioned LLM under the hood, and the prompt matters. The default prompt was basically "Transcribe the audio." and without any language conditioning, on very short utterances, the logits apparently had enough mass on the ology token to get stuck there. Adding a language hint ("Please transcribe the above English audio.") conditioned the model away from the attractor. Zero inference cost, the language token IDs get cached on first use.

Captured the two recordings that triggered it (handy-1774410810.wav, handy-1774410836.wav) as regression fixtures. Now those are permanent tests, if anyone removes the language hint the suite fails.

7.94s → 1.43s, one experiment at a time

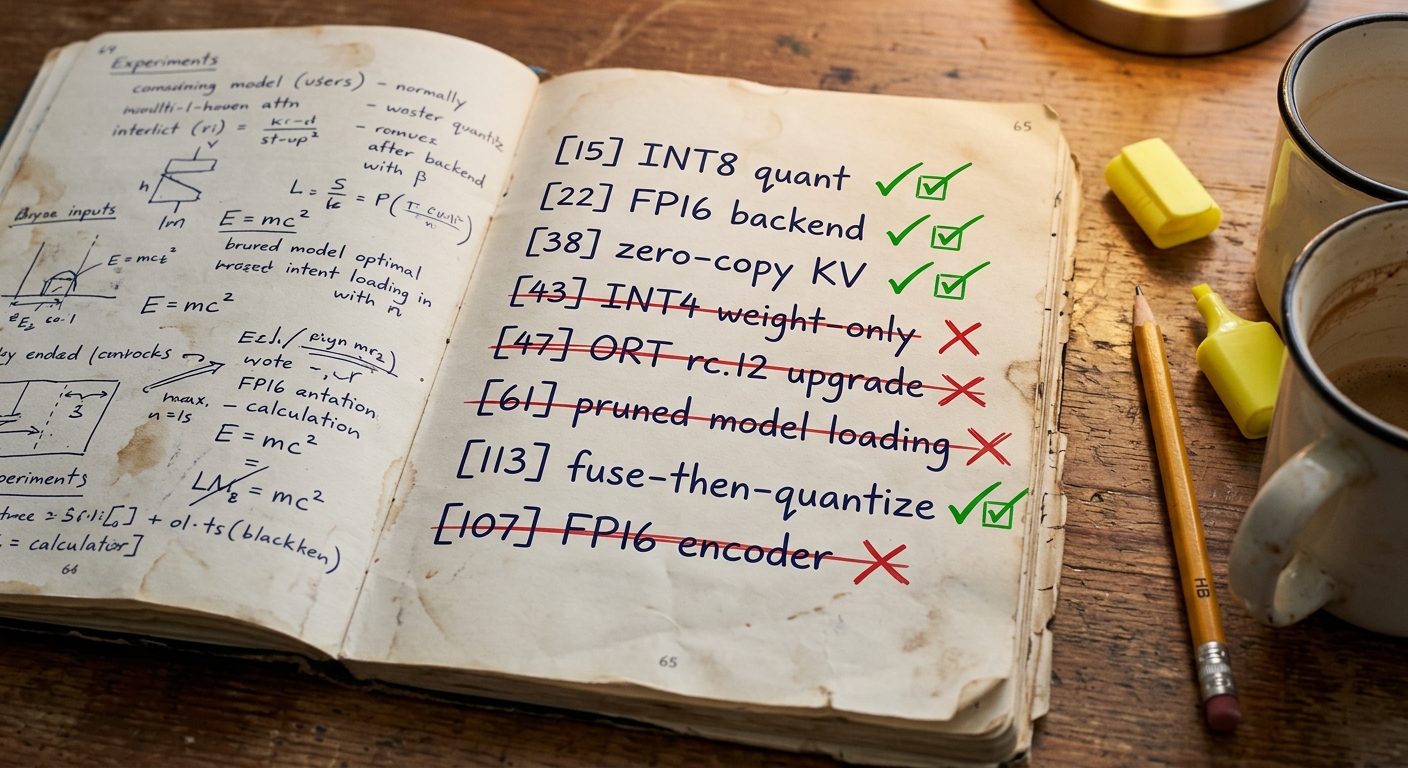

The optimisation work lives in qwen3-asr-onnx/INVESTIGATION.md, which currently runs to ~115 numbered experiments. Each one has a hypothesis, a measurement, and a verdict. The point of numbering them was to have a stable reference when later experiments referred back to earlier findings, and to not lose track of dead ends.

Worth spelling out the methodology, because it’s the thing that made getting to 115 experiments realistic at all. The shape was a long-horizon autonomous research loop, roughly:

- I’d seed the direction. Something like

"we're at 5.16% WER and 0.28x RTF on 0.6B int4, figure out what to try next." - An agent team goes off and brainstorms. Reads the recent quantisation literature, skims the ORT docs and source, looks at comparable open-source projects, comes back with a prioritised list of candidate experiments, each with rationale and expected upside. The list gets written as an ordered todo file in the repo.

- I skim the shortlist, strike anything that doesn’t make sense, approve the rest. This is the bit that takes me maybe 10 minutes.

- Then an experiment agent starts working through the todo list, one entry at a time. For each: implement it, run the benchmark (WER on 200 LibriSpeech samples plus RTF plus file size), write up a numbered entry in INVESTIGATION.md with hypothesis, approach, result, verdict. Commit the successful ones to git, revert the unsuccessful ones so the tree stays clean.

- Then sit with the result for a moment and think about it. Does this imply anything new to try? A follow-up variant, a combination with an earlier win, a sensitivity sweep? If so, add the new ideas to the bottom of the todo list. Move on to the next experiment.

That last step is what makes it a research loop rather than just an execution queue. Findings generate follow-up questions, follow-ups become new numbered experiments, the todo list grows and shrinks organically as the investigation unfolds. A handful of the branches in INVESTIGATION.md exist only because an earlier experiment surprised everyone and opened up a new direction to probe.

The point is that once it’s running, it runs with zero input from me. I’d kick off the loop in the morning, get on with something else entirely, come back in the evening to a commit history with a dozen or more new numbered experiments and a fatter INVESTIGATION.md. Some of the branches ran overnight unattended. The GPTQ disk-crash episodes I mentioned earlier were just the loop running autonomously until WSL’s VHD hit the C:\ ceiling and fell over, which is how you end up finding out that GPTQ leaves 6.5 GB of UUID-named temp files behind on every run.

A quick tour of what actually moved the needle, in order of impact:

[15] INT8 dynamic quantisation. The single biggest win. Offline quantize_dynamic() on the decoder, no calibration required. 3.26s → 1.71s. −47.5%. Output was token-identical except one comma replaced with a semicolon. This became the foundation, basically every later experiment was layered on top of INT8.

[6] Sequential ORT execution mode. Second biggest win. The autoregressive decoder is a linear chain of 28 transformer layers with nothing to schedule in parallel. ORT’s default parallel execution mode was just adding scheduling overhead. Flipping to sequential mode saved 17% (4.06s → 3.37s) with zero WER impact.

[21] INT8 encoder via MatMul-only quantisation. The encoder has 147 MatMul ops and 3 Conv ops. ORT has no ConvInteger support so you can’t straight-up INT8 the whole thing, but you can quantise just the MatMuls. Encoder dropped 717 MB → 197 MB, another 12.5% speedup.

[38] Zero-copy KV cache via DynValue pass-through. This one’s subtle. The default Rust ORT idiom for getting session outputs is .try_extract_array()?.to_owned().into_dyn() which copies. But SessionOutputs::remove() gives you an owned DynValue you can pass straight back in as the next input. ORT seems to skip internal copies when it sees the same DynValue round-tripping. Saves ~357 MB of memcpy over a 25-step decode. 7% speedup, pure free lunch.

[3] Split decoder preference over the unified variant. Load time got worse (~14s for two ONNX files) but inference was quicker because ORT could optimise the per-step graph independently.

[17] Vectorised argmax. Argmax over the logits was being done token-by-token via ndarray. Replacing with a raw slice iteration was only 1%, but free.

Between those five or six you’ve got most of the headline speedup. Then there’s a long tail of smaller wins and a longer tail of things that didn’t work.

The dead ends (which were actually the interesting bits)

Probably half the numbered experiments failed or regressed. That’s the point though, without running them I wouldn’t have known.

Unified INT8 decoder. When I first got the split decoder working I wanted to compare against a unified INT8 version. Unified came in at 3.18s vs split’s 1.54s. ORT cannot optimise a graph designed for arbitrary sequence lengths down to the efficient per-step case. Stayed split.

Per-channel INT8 quantisation. Textbook advice is per-channel over per-tensor for accuracy. But per-channel made it 14% slower on this graph with no measurable WER benefit. Per-tensor won.

FP16 encoder, weight-only [107]. Converting the encoder’s FP32 initialisers to FP16 and inserting Cast nodes. Catastrophic: 100% WER. The attention mask constant is -3.4e38 and that overflows FP16 (which tops out at ±65,504). The mask becomes -inf and the encoder output gets destroyed. Can’t fix without retraining.

FP16 encoder, native autocast [108]. Tried again with torch.amp.autocast('cpu', dtype=torch.float16) which keeps softmax and LayerNorm in FP32 and only does FP16 on the safe ops. Correctness was fine (5.13% WER, within noise of baseline) but on native Windows it ran 8.7% slower than FP32. Cast nodes at the boundary prevented op fusion. FP32 encoder stays on CPU, the FP16 version is kept around for GPU targets.

MatMulNBits INT4 decoder, early attempt [43]. INT4 weight-only was 1.97s vs INT8’s 1.64s. The dequantisation overhead on each matmul exceeded the memory bandwidth savings. Looked like a bust. Turned out it wasn’t, see accuracy_level below.

Per-layer KV cache decoder export [41]. 43% of decoder time was Concat and Split ops on the KV cache. Profiler showed this clearly. I figured if I re-exported with per-layer KV inputs (past_key_0…past_key_27) instead of stacked tensors, those ops would vanish. They did, but the PyTorch tracer for variadic inputs generated 7203 nodes where the stacked version had 2266. Ended up 40% slower per step. The JIT won.

ORT rc.12 upgrade [47]. Tried bumping the Rust ORT crate from rc.10 to rc.12 (ORT 1.24.2). Degraded to 2.88s vs 1.54s. Pyke’s 1.24.2 binary is meaningfully slower than pip’s 1.24.3 for some reason. Reverted.

Intra-op thread spinning disabled [19]. Thread spin-waiting between autoregressive steps looked wasteful, I figured disabling it would let the CPU idle. Result: 3.28s vs 1.72s. Spinning is critical for low-latency wakeup on a per-token loop. Leave it on.

Selective layer exclusion from INT4 [114]. Literature says first and last layers are sensitive to quantisation, so keeping those + lm_head at FP32 should recover accuracy. It did, 4.96% WER vs 5.16%. But 25% slower (FP32 ops are slow in an otherwise int4 graph), and three short utterances produced "ology" outputs (the same degenerate failure mode from earlier, now triggered by FP32/int4 boundary distribution mismatch). Not worth shipping.

Three breakthroughs that weren’t obvious

These are the ones I didn’t expect to find.

AWQ smoothing alpha = 0.2 [68]–[88]. AWQ (Activation-aware Weight Quantisation) scales weight rows by a learned smoothing factor before quantisation, trading weight precision for activation precision. There’s an alpha parameter between 0 and 1. The paper’s recommendation for LLMs is usually 0.5. I ran a sweep from 0.1 to 1.0 on the 0.6B model:

| α | WER (200 samples) |

|---|---|

| 0 (no smoothing) | 6.75% |

| 0.2 | 5.21% |

| 0.5 | 5.62% |

| 0.8 | 6.04% |

0.2 beat the “recommended” default meaningfully. This model is apparently less activation-outlier-dominated than larger LLMs. The calibration activations get cached so the sweep is cheap to re-run, awq_smooth.py --alpha 0.2 --activations-cache calibration.npz. That cache was probably the most reused 2 GB file on my laptop that month.

accuracy_level=4 [104]. ORT’s MatMulNBitsQuantizer for INT4 has an accuracy_level parameter. Default is 0, max is 4. Intuitively higher accuracy_level = slower + more accurate. But on x86 with AVX-512 it actually selects a different accumulation kernel which is both faster and more accurate:

| accuracy_level | RTF | WER |

|---|---|---|

| default | 0.26x | 5.28% |

| 4 | 0.15x | 5.18% |

Free pareto improvement. I added this to every int4 export I’ve done since. Wouldn’t have found it without running the sweep, and it wasn’t obvious from reading the ORT docs which are pretty terse on what the levels actually select at runtime.

Fuse-then-quantise [113]. ORT has a transformer optimiser that fuses decomposed RMSNorm into SimplifiedLayerNormalization, SkipLayerNorm, BiasGelu, that sort of thing. On FP32 decoders it gives a ~7% speedup. When I tried it on int4 decoders (running the fusion after quantisation) I got a +0.59pp WER regression, the rounding in the fused kernels compounded with the int4 quantisation error and flipped tokens at decision boundaries. Looked like fusion and int4 just weren’t compatible.

Reversed the order. Fuse the FP32 graph first, then quantise the already-fused model. WER back to baseline (actually marginally better at 5.13% vs 5.16%), and the 4.2% speedup from fusion came along for the ride. The lesson: RTN calibrates against whatever graph you give it, so give it the graph you actually want to run.

Combining those three, 0.6B int4 went from 5.16% WER at 0.28x RTF to 5.13% WER at 0.22x RTF. 24% faster than where the int4 pipeline started, with no quality penalty.

What the 1.7B got

The 0.6B’s results were decent but the 1.7B is where Qwen3 really pulls away from Parakeet on accuracy. FP32 WER on 200-sample LibriSpeech test-other: Parakeet INT8 sits at 5.45%, Qwen3 0.6B FP32 at 4.42%, and Qwen3 1.7B FP32 at 3.79%. The question was whether the 1.7B could be quantised aggressively enough to be shippable on consumer hardware.

Naive AWQ INT8 α=0.2 on 1.7B was a disaster at 9.04% WER. Worse than Parakeet. Straight RTN int4 was better (4.33%) but ran at 0.56x RTF which is getting slow for anyone without a recent CPU.

The winner was a mix: GPTQ on decoder_init, RTN on decoder_step, both with accuracy_level=4. GPTQ (a proper calibration-based layer-wise quantisation) takes about 20 minutes to run and captures calibration activations from a bunch of LibriSpeech samples. It works on the larger model because decoder_init has more capacity to absorb quantisation-aware weight redistribution. RTN on decoder_step was fine, the autoregressive step is small enough not to need GPTQ’s sophistication.

Final 1.7B: 4.25% WER, 0.34x RTF, 4.3 GB. Interestingly GPTQ hurt the 0.6B model (6.01% WER vs 5.16% for RTN-only). Smaller models don’t have enough capacity to benefit from layer-wise reconstruction and start overfitting to the calibration set. Different optimisation for each size class.

The 1.44 second mirage

Somewhere around experiment [38] the benchmarks started reporting 1.43–1.44s. That number ended up in my memory notes, in internal PR summaries, in the Handy model card draft. It was the steady state in my head.

When I later started doing weight-sharing experiments ([109]) I wanted controlled before/after benchmarks, so I set up git worktrees and ran 10 back-to-back measurements on both versions. Neither one reproduced 1.44s, both settled at ~2.07s on the same hardware.

Long story short, the 1.44s was a transient measurement artefact. Probably a warmup effect with only 1 or 2 runs, or different CPU load conditions, or an ORT version that got bumped and I didn’t notice. The controlled benchmarks (2 warmup + 5 timed) give ~1.43–1.5s on native Windows, and ~2.0s in WSL where ORT’s Linux binary is 2–4× slower than Windows for reasons I haven’t dug into yet.

The lesson, which I keep re-learning: single-shot measurements on autoregressive inference are insanely noisy. Variable per-step times, variable thermal conditions, whatever the OS is doing in the background. Everything needs a warmup phase and a sample size now.

ORT thread count as a shippable feature

Parallel to the optimisation work, something that kept biting: ORT’s default thread count is “all logical cores” and that’s frequently not optimal for autoregressive decode. On my Ryzen AI 7 PRO 350 (8 physical, 16 logical), 6 threads gave 7.1× realtime where 16 threads gave 6.7×. Cache contention and scheduler overhead apparently eat the parallelism beyond the physical core count.

So we added two things to transcribe-rs and Handy:

- A manual override:

set_ort_intra_threads()/get_ort_intra_threads()in transcribe-rs. Global atomic, read bybuild_session()if the caller hasn’t set something explicit. Handy exposes it as a slider in Advanced Settings. - An auto-tune button: benchmark a coarse grid (1, 2, 4, 6, 8, 12, 16, 20, 24, 32) using real recordings from the user’s history, show the RTF table, pick the winner. About 1–2 minutes to run because each trial needs a full model reload.

The auto-tune work surfaced a nice race condition. The benchmark rapidly cycles load/unload to test each thread count. I used a LoadingGuard to prevent concurrent loads. But the idle-timeout watcher that unloads models after inactivity didn’t check the guard, it just unloaded unconditionally. During a benchmark, the watcher would occasionally fire between a reload and the next transcription, killing the run. Fixed by adding an is_loading check at the top of unload_model(). Stress testing with benchmarks surfaces race conditions that normal usage never would.

What this project added

Alibaba’s contribution was the model itself: architecture, training, tokenizer, PyTorch weights. That’s the big one and none of the rest matters without it. What this project built on top, starting from those weights:

- Working ONNX export pipeline. Non-trivial. Five separate export-time issues to solve before the first trace even ran (see above), plus the decoder had to be split into prefill + step for any hope of per-token efficiency.

Then the performance work, which is what the bulk of this post has been about:

- Split decoder preference (init + step), roughly 2× inference speedup

- Sequential ORT execution mode tuning, 17% saved on the autoregressive loop

- INT8 dynamic quantisation pipeline, 47% off baseline

- MatMul-only INT8 encoder, 72% file size reduction, 12% speed

- Zero-copy KV cache via DynValue, 7% saved, ~357 MB of memcpy avoided per 25-step decode

- ORT transformer optimiser graph fusion applied before quantisation, 4–7% speed

- accuracy_level=4 kernel selection, faster and more accurate

- AWQ alpha sweep, 0.2 is optimal for this model (vs the typical 0.5 default)

- GPTQ calibration pipeline for the 1.7B, 0.08pp WER improvement over RTN alone

- Weight sharing for int4, 234 MB off 0.6B, 937 MB off 1.7B via hash-based dedup

- Thread autotuning, 6 threads beats 16 on Zen 5

- Benchmark-driven iteration, 114 numbered experiments with WER + RTF for each

Final numbers for the 0.6B recommended variant: 5.13% WER, 0.22x RTF, 2.1 GB tar.gz on native Windows. Parakeet INT8 for comparison: 5.45% WER, 0.16x RTF. Same speed class, better WER, full punctuation and capitalisation (Parakeet has neither).

For the 1.7B: 4.25% WER, 0.34x RTF, 4.3 GB. Roughly 2× slower than Parakeet but the WER gap is over 1 full percentage point which is enormous at this quality level.

LLM autonomy in this

Worth being honest about: a big chunk of this work was run by Claude agents, and not just the execution. The research and brainstorming were too.

The typical pattern: I’d seed a direction to an agent team along the lines of "we're at 5.16% WER and 0.28x RTF on 0.6B int4. What haven't we tried? Go look at the recent Qwen3 quantisation literature, the ORT optimiser docs, any other open-source projects doing int4 MatMulNBits, and come back with a shortlist of candidate experiments with rationale and expected upside." Then a research subagent or two would go away, read papers, skim GitHub, put together a prioritised list with their reasoning, and drop it back into the session. I’d skim that, pick which ones felt worth spending disk and compute on, and the next round of agents would run the trials.

So the accuracy_level=4 finding, the AWQ alpha sweep, the fuse-then-quantise ordering, the selective layer exclusion idea, the GPTQ-for-large-not-small split, all of those came out of that research loop. I was not the one reading the papers. I was the one reviewing the shortlists and asking “ok why would that work, what would we expect to see” before approving the runs.

The three top-level sessions (one per repo) stayed up for weeks and coordinated over claude-net as I described earlier. A typical beat: the Handy session hits a bug where int4 variants aren’t showing up in the model picker, pings the transcribe-rs session "can you check if the Qwen3 engine is actually advertising int4 in available_quantizations()?", that session does the code read and replies with a diff suggestion, Handy applies it. Or the other way around: the qwen3-asr-onnx session finishes a quantisation run, broadcasts "new model uploaded, SHAs attached" into the shared team channel, and both the transcribe-rs and Handy sessions pick it up and update their model registry entries. You don’t get this neatly from a single session trying to hold three repos in its head. Each session has its own local context, its own CLAUDE.md, its own git state. They just talk when they need to.

The division of labour settled pretty naturally. Agents are great at reading a pile of papers, running the export pipeline, running calibration, running the 200-sample WER eval, updating INVESTIGATION.md, cleaning up temp files, and relaying coordination messages between repos. I’m needed for picking which directions are actually worth pursuing, noticing a result looks suspicious (the 1.44s mirage, or "hang on, is this int4 really using FP16 encoder like the config claims?"), sanity-checking the methodology, and making the resource/priority calls. The agent can verify the FP16 claim in 30 seconds but it won’t ask the question, and it generally won’t push back on its own earlier conclusions without prompting.

With that shape of collaboration in place I was genuinely more ambitious about what to attempt than I would have been doing this solo. I wouldn’t have bothered with a 10-point AWQ alpha sweep, or a 90-experiment quantisation investigation, or daily GPU-backend evals, if I’d had to babysit each run. With a research agent surfacing options and a trial agent running the matrix, it’s just “here’s the shortlist, which ones do we approve, tell me what wins.”

Where it’s at now

Shipped to Handy on the feat/qwen3-batch branch, PR cjpais#48, rebased onto v0.3.2+whisper-cuda as of March 18. Both 0.6B and 1.7B int4 variants are on HuggingFace at andrewleech/qwen3-asr-0.6b-onnx and andrewleech/qwen3-asr-1.7b-onnx. The auto-tune thread count feature landed in the same PR and surprised me by how obvious-in-hindsight it felt as a user feature once it shipped.

Open questions I haven’t got to yet:

- FP16 on GPU. FP32 encoder is the CPU winner but on GPU hardware the FP16 variant should actually pay off. Haven’t built + benchmarked a WebGPU or DirectML variant properly yet. Handy has WebGPU support wired in via a separate branch.

- Voxtral. 3B/4B, newer architecture. Probably the next engine to integrate once GPU backend maturity catches up.

- Whether the per-step embedding lookup in the v3 hybrid decoder format becomes a memory bandwidth bottleneck for very long transcriptions. Current measurements are on 11s and 35s clips, 5-minute meeting transcripts might tell a different story.

That being said, the thing works, the WER is good, it’s fast enough to use in anger, and the notes I mumble at my laptop on a park bench actually come out legible now. Which was the whole point.